IT Infrastructure Monitoring

Behind the Software Q&A with Opengear CEO Rick Stevenson

Company networks are getting larger and larger, but that doesn’t necessarily mean the same is true for IT staff (or IT budgets). These days a company might have multiple sites spread across a huge geographic area, but only one centralized IT team to address the needs of every branch. In this Q&A with Opengear, we talked to CEO Rick Stevenson to learn how his company is combining expertise in IT software and hardware to address the challenges of remote IT management.

For more information on Opengear and their products be sure to check out the company website.

The first question is an obvious one. How did Opengear get started? To be more precise, what were the specific business needs present at the time that the company set out to address?

Just a brief history: the folks behind Opengear–we all worked together at previous companies. We actually had a company called SnapGear, which was acquired. After that happened, a few of the guys went off to do something new, which was the genesis of the company. So you have the team who wanted to work together again.

I’m not sure we actually had a particular problem in mind when I started it. We had some talent. We had done this a couple times before. We are good at leveraging open source software. We are good at building modern hardware appliances. I guess we looked for something to do rather than have an inspirational moment. While looking we decided that console servers looked like an interesting place from which to start building a business, and from there look at more interesting opportunities. The thing that stood out to us after a while is that remote management is becoming a difficult problem, and it might be an area where appliances have a good place. In our case, it’s kind of become the hardware to some extent. Of course, the software is the most important part of any hardware appliance these days.

That dovetails into my next question. You’re involved in both the hardware and software side of data center management. Is there one that you define as the focus of Opengear as a business? Or is it a 50/50 split?

The data center is kind of the old world. It’s a little bit more staid and stable and well defined. It was a large part of our business to begin with.

These days I think that remote management is a much more interesting opportunity in terms of growth. Data centers are here to stay, of course, but they’re a relatively stable sort of thing. It’s not growing at huge compound annual growth rates; whereas remote management is a much bigger opportunity, and that’s where a lot of our focus is.

Does having your feet in both the hardware and software side affect the way that you do business at all? For example, if you focus only on one element, does it present any particular challenges or advantages that you otherwise wouldn’t have?

Certainly it does provide some challenges. Putting together a software company isn’t easy, but adding the dimension of hardware as well requires a bunch of skills, contacts and experience. Hardware manufacturing is kind of a pain to do. You need to have a good reason to do it. In our case it’s to get to the right price point on the hardware. You really need to do it yourself to do that rather than use OEM. The big advantage is that you can build something at that price point, and you can build an autonomous device.

In a remote situation we don’t know what’s working and what’s not working. Running management software on a PC or server at the remote site is actually problematic because that may be the device that’s faulty. An autonomous appliance gives you a place outside the network to monitor and access from, and it allows you to build a bunch of smarts into the edge. Rather than concentrating the smart stuff in the server, the monitoring system can actually distribute that intelligence and hopefully produce a good result.

Could you define remote management? What entails remote management versus the traditional ways of management?

The complexity of systems is increasing and continuing to increase, and as a result systems are becoming more fragile. I don’t know if you’ve ever heard of Glass’ Law of Complexity. It claims that every 25 percent increase in functionality gives you a 100 percent increase in complexity, which is a simplification.
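Editor's note: to see how quickly that rule of thumb grows, here is a minimal sketch that assumes the 25 percent/100 percent relationship compounds, so each further 25 percent of functionality doubles complexity again. The function name is hypothetical, and the compounding reading is our assumption rather than something stated in the interview.

```python
import math


def complexity_multiplier(functionality_multiplier: float) -> float:
    """Complexity growth implied by the rule, assuming it compounds.

    Assumption: every 1.25x step in functionality doubles complexity.
    """
    if functionality_multiplier <= 0:
        raise ValueError("functionality_multiplier must be positive")
    # How many successive 25 percent steps are needed to reach the multiplier.
    steps = math.log(functionality_multiplier) / math.log(1.25)
    # Each step doubles complexity.
    return 2.0 ** steps


if __name__ == "__main__":
    for f in (1.25, 2.0, 4.0):
        print(f"{f:.2f}x functionality -> {complexity_multiplier(f):.1f}x complexity")
```

Under that assumption, doubling functionality makes a system more than eight times as complex.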

At the same time, though, we are depending a lot more on external things. So we’re depending on the Cloud for the day-to-day applications. We are depending on mobile devices and cellular networks. It’s becoming harder and harder to maintain reliability. In the days when everything was concentrated in the data center, we had five nines reliability. That’s not really feasible anymore because of the number of things that are involved. At the same time, the cost of failure increases. These days if the Cloud is down for a day, it’s a nightmare. But previously you had internal systems. You could generally keep things running. Systems are becoming less reliable, and it’s more of an issue when they are.
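Editor's note: the arithmetic behind "five nines" and why it erodes as dependencies pile up can be sketched as follows. The component availabilities in the usage example are made-up illustrative figures, not measurements from Opengear or anyone else, and the model assumes independent components that must all be up at once.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600, ignoring leap years


def downtime_minutes_per_year(availability: float) -> float:
    """Expected yearly downtime for an availability given as a fraction."""
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    return (1.0 - availability) * MINUTES_PER_YEAR


def serial_availability(*component_availabilities: float) -> float:
    """Availability of a chain in which every component must be up.

    Assumes failures are independent, which is a simplification.
    """
    result = 1.0
    for availability in component_availabilities:
        result *= availability
    return result


if __name__ == "__main__":
    print(f"five nines: {downtime_minutes_per_year(0.99999):.2f} min/year")
    # Hypothetical chain: branch router, ISP link, cloud application.
    chain = serial_availability(0.9999, 0.999, 0.9995)
    print(f"chain availability: {chain:.5f}")
    print(f"chain downtime: {downtime_minutes_per_year(chain) / 60:.1f} hours/year")
```

Five nines allows a little over five minutes of downtime a year. The hypothetical three-link chain, where no single link is worse than three nines, already comes to roughly 14 hours a year.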

There are also cost pressures. People don’t have IT staff everywhere anymore. Our solution to that is the ability to detect and fix faults as rapidly and cheaply as possible rather than trying to prevent faults from happening, which you do anyway, but you can’t just rely on that. So we provide the ability to detect when things go wrong and fix them. Regardless of whether it’s a local or a remote fault, we don’t think that there should be any difference in how you handle it. Remote management is all about having remote sites. A company with lots of offices won’t have IT people at every site. Every site will be connected to the Cloud or to a VPN. It will have a bunch of equipment in the closet somewhere, which is providing a lot of the infrastructure that the branch needs. Then there’s no one locally who could fix those.

What we do is give you an appliance that you can put at that site, which allows your centralized IT people or your managed service provider (MSP) to see what’s going on at the site and to sort out configurations, fix things when they go wrong and work around problems. We do that out of band, so obviously if a catastrophic failure happens, if your ISP goes down or your main router crashes, we provide an out-of-band secondary path into that site – usually via cellular these days. So you can get in and fix things easily in the networks.
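Editor's note: the idea of falling back to a secondary path can be sketched in a few lines. This is a generic illustration, not Opengear's implementation; the function names, path names and addresses are all hypothetical, and a real appliance would do far more than a single reachability probe.

```python
import socket
from typing import Callable, Optional, Sequence, Tuple

Probe = Callable[[], bool]


def tcp_probe(host: str, port: int, timeout: float = 3.0) -> Probe:
    """Build a probe that reports whether a TCP connection can be opened."""
    def probe() -> bool:
        try:
            with socket.create_connection((host, port), timeout=timeout):
                return True
        except OSError:
            return False
    return probe


def choose_management_path(paths: Sequence[Tuple[str, Probe]]) -> Optional[str]:
    """Return the name of the first path whose probe succeeds, or None.

    Paths are tried in order, so list the primary (in-band) path first
    and the out-of-band path (for example cellular) after it.
    """
    for name, probe in paths:
        try:
            if probe():
                return name
        except OSError:
            # A probe that raises is treated the same as a failed path.
            continue
    return None


if __name__ == "__main__":
    # Hypothetical addresses from the documentation ranges.
    paths = [
        ("primary-wan", tcp_probe("192.0.2.10", 22)),
        ("cellular-oob", tcp_probe("198.51.100.10", 22)),
    ]
    print(choose_management_path(paths))
```

Keeping the probes as plain callables means the ordering logic can be tested without any network access.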

One of the network trends we hear about a lot is bringing your own device (BYOD). Is that something that you have integrated into your strategy when you are servicing your clients?

We do in terms of allowing access via cell phones and tablets and things, because an IT manager may need to fix something at midnight when he is at home, for example. In that sense we intersect directly with bringing your own device, except that’s another issue with respect to the complexity of systems. It feeds into the whole issue of complexity and resilience.

Last month, Gartner included you in their report on the alignment and integration of IT and operational technology. What does the integration of those two fields provide to enterprises? How is Opengear taking that integration into account going forward?

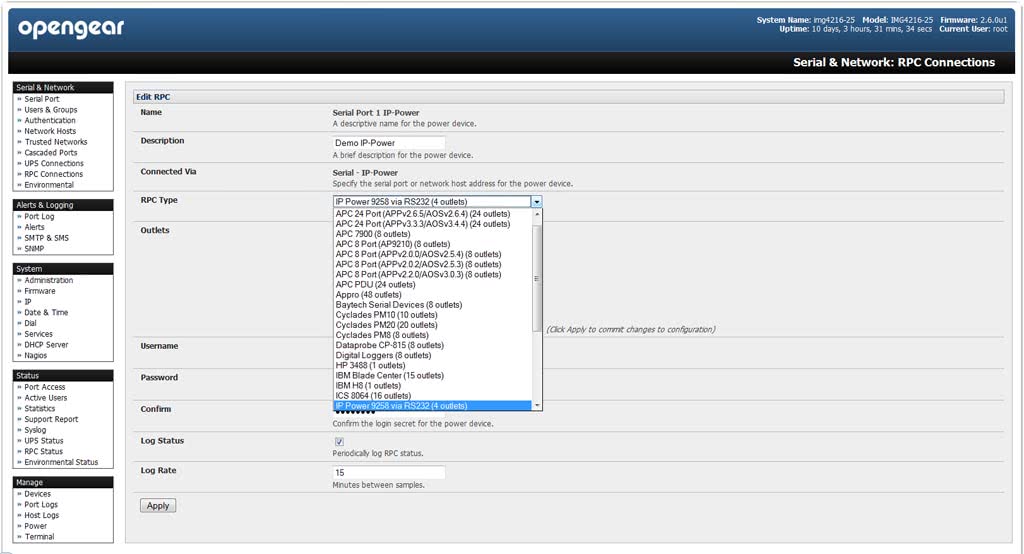

The Gartner report was about the convergence of IT and Operational Technology (OT), which is one of their things. The IT devices that we typically manage are things like routers, switches, firewalls, servers, power infrastructure such as UPSs (uninterruptible power supplies) and PDUs (power distribution units), and communications gear in general. That’s your traditional IT gear, and we are well set up to manage that.

We manage that at a console level. We talk through service processors and console ports so that you can do things with those devices even when they are partially malfunctioning or misconfigured. Operational technology is all about managing facilities, HVAC systems, security systems and even industrial systems. It’s all the other stuff that’s traditionally been handled by a different group from the IT people. So they have owned that, and Gartner believes that those two things are converging in the future, meaning it will all be the responsibility of one group. Normally the interfaces we use to talk to IT gear can be used to speak to those sorts of devices as well, but we’ve also incorporated some digital I/O and interfaces that are specific to those non-IT operational technology devices.

We give you a single platform where you can manage both those things. To an extent, whether the politics of the organization are such that those two groups are merged or not doesn’t really matter. They at least have a central access point to all that gear. But at some level IT is becoming a basic commodity alongside things like air conditioning and power. The other thing we do is that we have the ability to monitor environmental sensors as well. We can keep track of temperature and humidity. We can detect small leaks, smoke and vibrations. You can also connect things like IP cameras to our device, so we can cover a lot more than just the traditional IT gear.
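Editor's note: environmental monitoring of this kind usually comes down to comparing readings against configured limits. The sketch below is a generic illustration with hypothetical sensor names and limits; it does not reflect how Opengear's devices are actually configured.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Limit:
    low: float
    high: float


def out_of_range(readings: Dict[str, float], limits: Dict[str, Limit]) -> List[str]:
    """Return one alert message per reading that falls outside its limit."""
    alerts = []
    for name, value in sorted(readings.items()):
        limit = limits.get(name)
        if limit is None:
            continue  # no limit configured for this sensor
        if not (limit.low <= value <= limit.high):
            alerts.append(f"{name}={value} outside [{limit.low}, {limit.high}]")
    return alerts


if __name__ == "__main__":
    # Hypothetical sensor names and limits.
    limits = {
        "temperature_c": Limit(10.0, 35.0),
        "humidity_pct": Limit(20.0, 80.0),
    }
    readings = {"temperature_c": 41.0, "humidity_pct": 45.0}
    for alert in out_of_range(readings, limits):
        print(alert)
```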

We’ve heard about that kind of integration between facilities management and IT management in the form of Data Center Infrastructure Management (DCIM). It’s interesting that it’s also being extended to remote management.

Yeah, we have some really interesting examples. One example is we had a motorway built here recently. They have fiber connectivity up and down the motorway to talk to road signs and all sorts of gear. They put in a bunch of monitoring stations along the motorway to keep an eye on the health of things and to house all the IT equipment. They put our devices in there as well, and there was a flood earlier this year. They weren’t able to actually access any of these boxes physically because of the flood, but they could keep an eye on water levels and check the power levels to see how batteries were going. They managed everything through that flood and got everything done remotely. It was actually pretty cool.

Are there any specific types of enterprise or organization that seek this kind of technology out? If so, has that affected your business strategy at all?

The devices have a horizontal appeal. They’re something that anybody with IT equipment, particularly with remote offices, might need. But the early adopters are typically the people who really can’t afford network downtime. In particular, we see a lot of interest and implementations in financial companies, telcos, insurance–the guys for whom if their Internet connection is down or their apps are down, their main business is down.

One thing I noticed about Opengear right off the bat is that you have a really experienced management team. How has that pool of talent at the management level and also on the board affected your operations – especially internationally?

Yes, it’s made it easier to bootstrap the way we’ve done things. We’ve all made lots of mistakes in the past. We’ve managed to avoid some of those at Opengear. It’s good having that experience there to bounce ideas off people, and generally we’ve built the company in a reasonably effective way. You always run into new problems and make new mistakes, of course. Despite that I think we’ve gone about it in a fairly measured way.

We haven’t been trying to do something which is world beating with either huge success or crash and burn. We’ve been relatively conservative, and built the business in a more measured manner. We think that gave us a much greater chance of success and longevity. That’s partly because we’re mostly engineers on the team. We have that sort of view on life. It’s a good and a bad thing.

A lot of the people that we’re dealing with are relatively conservative as well. We work a lot with network administrators and IT guys, and they’re not the sort of people that take risks with new technology.

Usually in those industries when you hear something described as “drastic change,” it’s not typically associated with good things.

That’s right. That’s also our experience with open source. We contribute to a bunch of open source projects and have done so in our previous lives as well. That allows relatively small companies to start up and start building significant products without a really huge investment in time that you need to build a proprietary system from scratch.

How has this industry changed since you guys started in 2004?

I think the biggest change technology-wise has been the rise of cellular. We had the crossover maybe a year ago, where sales of modem-based products were eclipsed by sales of cellular products. That’s accelerating really rapidly. The advent of dirt cheap data plans has made a big difference. The convenience of cellular–that’s a big technological change.

With the economy a little poorer for a while we saw people being a little more willing to try something new in terms of management. There was also some pressure to do more with fewer resources IT-wise. That’s something that plays to our strengths. People are trying to wrangle up more efficiencies. As such there’s a lot more interest in monitoring data center equipment and the overall management.

The way it’s typically spun here is turning the data center, or IT in general, into a cost-saving center. I’m not so sure how realistic that is.

Yes. It’s probably a little bit of wishful thinking. The whole reliance on the Cloud is obviously a major trend that’s affected us as well. The naïve view might be that going to the Cloud means you don’t have to worry about these things anymore, but in reality everybody has a connection to the Cloud. That’s now a critical resource.

What’s the biggest challenge that you are currently facing? That could be either business or technology. How are you addressing that?

Probably one of the biggest technical challenges we face is the fast pace and cost of integrating cellular technology. It’s changing all the time: the modules and the base technology all change rapidly. There are also a bunch of standards that are changing. There are a lot of issues with global compatibility, and then there’s the regulatory regime in cellular.

Regulation means that it’s quite expensive and difficult to get the devices approved for use on the cellular networks. That’s partly because the cellular networks are still very much focused on mobile handsets. So the rules that apply for handsets don’t necessarily make sense for a racked device, but you still need to jump through the same hoops. The carriers also all have different rules and regulations. Data plans are evolving quite quickly as well. That’s something that really keeps us on our toes. In terms of addressing it, it’s really just a matter of keeping a close eye on what’s going on; keeping your feelers out and working with people who know their way around it.

There’s one other thing that I think is something of a challenge as well. That’s the general immaturity of the management solutions and the churn that’s going on in the management world. There are still a lot of incompatible proprietary systems, and the standards are evolving pretty slowly. A lot of stuff is still based on Simple Network Management Protocol (SNMP), which is good, stable technology, but it’s pretty old. We find that integration with other systems is difficult, and it’s an area where hopefully things start to change.

Where do you see this industry going in the near future? What kind of challenges and opportunities do you see on the horizon?

I don’t see any sort of dramatic changes. I think it’s going to be more of the same, and that’s already difficult enough as it is. I think we’re going to continue to become more and more reliant on stuff in the Cloud and more reliant on cellular networks, and it will continue to become more important to be able to fix things when they break and to detect when things are going wrong.

Learn more about Opengear and other IT infrastructure monitoring platforms by downloading our side-by-side comparison report of the Top 10 IT Infrastructure Monitoring Software solutions. You can also browse all of our exclusive resources on IT management by visiting the IT Management Software resource center.