
Seeing Green: Using DCIM to Cut IT Power Consumption

There’s more pressure than ever for IT to do more than just keep everything online. More people in C-level positions (not just CIOs) are gaining a better understanding of IT matters and adjusting their expectations accordingly. Optimization and cost savings have become recurring themes in enterprise IT strategies. Data center infrastructure management (DCIM) takes those themes to heart and makes them central to what it offers data center managers.

Tools for data center maintenance aren’t anything new. Where DCIM differs is that it takes what were previously two separate disciplines of data center maintenance, IT management and facilities management, and addresses both in the same solution. That means a single piece of software for monitoring and analyzing logic-layer functions as well as physical-layer concerns like equipment temperature, airflow and floor plans.

Here’s an outline of some of the ways enterprise networks stand to benefit from DCIM in terms of energy cost and efficiency.

Improved Energy Efficiency

Data centers are expensive in more ways than one: they take up a huge amount of space and consume a huge amount of energy. Only through diligent monitoring, one of the core DCIM functions, does an organization stand a chance of identifying inefficient consumption and prescribing fixes.

Michael Phares, global marketing lead for Cormant, a DCIM vendor, emphasized the importance of monitoring tools as part of any effective DCIM suite:

Visualization via dynamic rack and plan views along with dashboards displaying real time and historical data, meaning that everyone from the CIO down can see precisely the information they require including capacity, trend, efficiency and environmental data.

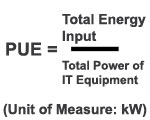

So important is the issue of data center power consumption that a metric was created to summarize a data center’s overall energy efficiency. Devised by The Green Grid, an IT association and think tank dedicated to proposing initiatives for better power usage in data centers, the Power Usage Effectiveness (PUE) metric is defined by the following equation:

$$\text{PUE} = \frac{\text{Total Facility Power}}{\text{IT Equipment Power}}$$

According to a 2008 white paper by The Green Grid, a PUE of 1 represents a data center with perfect energy usage: all of the power piped into the facility is consumed by the IT equipment itself, with none going to cooling, lighting or power-distribution losses. In reality, a PUE of 1 is far from the norm; a 2010 study put the industry average closer to 1.8.
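To make the arithmetic concrete, the Python sketch below computes PUE from two meter readings. Because PUE is a ratio, energy metered over the same period (in kWh) gives the same result as average power over that period. The function name `pue` and the sample figures are hypothetical, chosen only so the result lands on the 1.8 industry average mentioned above; they are not measurements from any real facility.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy.

    Both readings are assumed to cover the same measurement period.
    """
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("facility total cannot be less than the IT load it includes")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical monthly readings (illustrative only, not measured data).
total = 180_000.0   # kWh for the whole facility: IT load plus cooling, lighting, losses
it_load = 100_000.0  # kWh delivered to servers, storage and network gear

print(pue(total, it_load))  # 1.8
print(total - it_load)      # 80000.0 kWh of overhead that never reaches the IT gear
```

Reading the result the other way around: at a PUE of 1.8, every kilowatt-hour of useful computing costs an extra 0.8 kWh in overhead, which is the portion DCIM monitoring is meant to shrink.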

One gaping efficiency problem that plagues many data centers is so-called “ghost servers”: servers that see little to no use yet continue to occupy space and draw power from the grid. This can happen for any number of reasons, such as the bulk of operations migrating elsewhere. Identifying ghost servers has traditionally been a challenge, because a single reading of a metric like CPU usage can make a server appear “busy” when in fact it is only running secondary and tertiary processes. The real-time data provided by a DCIM solution allows CPU and memory usage to be tracked over time, revealing which servers run consistently below capacity. At that point the enterprise can either redistribute its computing more evenly or decommission the superfluous units entirely, saving rack space as well as the power needed to run the hardware and keep it cool.
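A minimal sketch of the tracking-over-time idea, assuming utilization samples have already been exported from a DCIM or monitoring tool. It flags a server as a ghost candidate only when CPU and memory usage stay below thresholds for most of the observation window, so an occasional background job does not make the server look busy. The `UtilizationSample` type, the thresholds and the 90% share are illustrative assumptions, not values taken from any DCIM product.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UtilizationSample:
    """One hypothetical utilization reading for a server."""
    server_id: str
    cpu_pct: float
    mem_pct: float


def find_ghost_candidates(samples, cpu_threshold=10.0, mem_threshold=20.0,
                          required_share=0.9, min_samples=4):
    """Return IDs of servers idle in at least `required_share` of their samples.

    A sample counts as idle when both CPU and memory usage fall below their
    thresholds. Servers with fewer than `min_samples` readings are skipped,
    since there is not enough history to judge them.
    """
    by_server = defaultdict(list)
    for sample in samples:
        by_server[sample.server_id].append(sample)

    candidates = []
    for server_id, rows in by_server.items():
        if len(rows) < min_samples:
            continue
        idle = sum(1 for r in rows
                   if r.cpu_pct < cpu_threshold and r.mem_pct < mem_threshold)
        if idle / len(rows) >= required_share:
            candidates.append(server_id)
    return sorted(candidates)


# Ten hourly samples for two hypothetical servers.
samples = []
for hour in range(10):
    samples.append(UtilizationSample("app-01", cpu_pct=55.0 + hour, mem_pct=60.0))
    # legacy-07 wakes up once (say, for a scheduled job) and is otherwise idle.
    cpu = 40.0 if hour == 3 else 2.0
    samples.append(UtilizationSample("legacy-07", cpu_pct=cpu, mem_pct=8.0))

print(find_ghost_candidates(samples))  # ['legacy-07']
```

Counting the share of idle samples, rather than looking at one snapshot, is the design choice that matters here: the single spike on `legacy-07` does not rescue a server that is idle the rest of the time. The output is a list of candidates for review, not a decommissioning decision.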

Heat Management

With all that power flowing into the facility and server processes running simultaneously, heat management is a major part of the physical layer of data center management. The irony is that the equipment used to keep servers cool (computer room air conditioning units, liquid cooling and airflow controls, among other things) accounts for a large share of the average data center’s energy consumption.

One duty already familiar to many a data center administrator is literally taking the temperature of each server to make sure nothing is overheating. Following the same logic, an integral part of the real-time data fed into a DCIM dashboard is active monitoring of temperatures across every piece of equipment in the facility.

By using tools like heat maps for individual servers and for the floor plan of the entire data center, operators can cut power usage by deploying cooling equipment more efficiently. For example, by tracking where and how heat spikes occur, operators could redirect existing cooling equipment toward those areas, or modify the floor plan layout to maximize airflow to the most stressed units, without sinking money into more power-hungry cooling. Additionally, with a clearer view of what’s happening on both the logic layer and the physical layer, operators can save on power costs simply by letting equipment run X degrees hotter, where X is determined by how much higher server temperatures can go before performance and reliability start to suffer.
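The hotspot-tracking and “run X degrees hotter” ideas can be sketched in a few lines, assuming a DCIM feed of rack inlet temperatures. The 27 °C limit is an assumption taken from the upper end of ASHRAE’s recommended inlet range; an operator would substitute its own. The function names, rack IDs and readings are hypothetical.

```python
from collections import Counter

# Assumed inlet ceiling in degrees C (upper end of ASHRAE's recommended range);
# an operator would substitute its own limit.
INLET_LIMIT_C = 27.0


def hotspot_counts(readings, limit_c=INLET_LIMIT_C):
    """Count how many readings per rack exceeded the inlet limit."""
    return Counter(rack for rack, temp_c in readings if temp_c > limit_c)


def headroom_by_rack(readings, limit_c=INLET_LIMIT_C):
    """Degrees between each rack's hottest observed inlet reading and the limit.

    Positive values suggest room to run that rack warmer; negative values mark
    a rack that already exceeds the limit.
    """
    hottest = {}
    for rack, temp_c in readings:
        hottest[rack] = max(temp_c, hottest.get(rack, temp_c))
    return {rack: round(limit_c - temp_c, 1) for rack, temp_c in hottest.items()}


# Hypothetical (rack_id, inlet temperature in C) samples.
readings = [
    ("A1", 22.5), ("A2", 23.0), ("B4", 27.8),
    ("A1", 23.1), ("B4", 28.4), ("B4", 26.9),
]
print(hotspot_counts(readings).most_common())  # [('B4', 2)]
print(headroom_by_rack(readings))              # {'A1': 3.9, 'A2': 4.0, 'B4': -1.4}
# A facility-wide X is bounded by the smallest headroom across all racks.
print(min(headroom_by_rack(readings).values()))  # -1.4
```

Read together, the outputs mirror the workflow described above: rack B4 is both the recurring hotspot and the only rack with negative headroom, so redirecting cooling or airflow there comes first. Only once B4 is brought under the limit would the roughly 4 °C of headroom on the other racks suggest a candidate value for X.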
