Behind the Software Q&A with SwiftStack CEO Joe Arnold

As disasters of all kinds (fires, earthquakes, hurricanes and superstorms) increasingly threaten business data archives, it is becoming important for enterprises to adopt solutions that effectively safeguard their files. That’s why more companies are turning to software-defined storage for safer and more affordable data storage. These systems can be brought online quickly, provide scalable storage capacity and protect vital corporate data in the event of a disaster.

SwiftStack is one such software-defined storage platform, built on the open source project Swift and featuring a simple yet scalable and secure object storage model. We spoke with SwiftStack CEO Joe Arnold about the recent launch of SwiftStack 2.0, the platform’s value as software-defined storage (SDS) and the nature of the open source industry.

Why don’t you start by telling us about how and why SwiftStack was founded. What challenges were you seeing in the industry that existing products failed to address?

Within OpenStack, there’s a project called Swift, which provides functionality very similar to Amazon S3. Swift allowed people to deploy their own large-scale, service-provider-grade object storage environments. I was fortunate to be involved in the early days of OpenStack and helped companies like Korea Telecom and Internap get up and running [with large-scale object storage based on Swift]. There was a lot of heavy lifting needed to get the system up and running and to turn it into a service.

The aha moment was [when we realized] we could build a product around this technology to make it easier for others to get up and running. The super large-scale public clouds like HP, IBM, Rackspace, even Oracle were all big users of Swift, but we asked how can we bring this to enterprises? How can we make it really simple to deploy so it doesn’t require as many people, and how can we enable them to use commodity storage infrastructure? It can be kind of scary to take on commodity infrastructure without the right management tools and without the right software stack that can deal with component failures. So about three years ago, we decided to take the plunge. A group of us that had built different infrastructure management products started building SwiftStack and working with our first batch of customers.

Your platform is based on the open source object storage project Swift. Why did you choose to work with an open source project rather than build a software-defined storage platform from scratch? What role does Swift play in the SwiftStack platform?

That being said, we know that companies want to buy products, not roll out projects. Customers really buy into the idea that an open source company needs to earn its keep. Meaning yes, you are consuming open source technology as a product, but at the end of the day that product has to earn its keep and provide a tremendous amount of value so customers keep coming back. The SwiftStack product is how we package open source and make it consumable.

You released SwiftStack 2.0 at the start of the summer – how does this launch help SwiftStack better address object storage needs among enterprises? What notable features did you roll out with SwiftStack 2.0?

Our focus as a company is to have a product that makes it really easy to consume open source software and commodity storage infrastructure. That’s our job. If we can make that easy to deploy, maintain, upgrade, scale up and add capacity to, we’re doing our job. I’ll highlight three notable features that we rolled out as part of 2.0. The first is a file system gateway, which enables people to use existing applications that would normally talk to a file system and put that data into object storage. What’s unique about our approach is that we built it so that an object can go in and then you can get it out via the file system gateway. Or conversely, a file can go in through the file system gateway and then a user can take it out as an object. This really enables people to transition to a large-scale object store over a period of time without having to change every single one of their applications to speak a new storage protocol.
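
To make the two-way access concrete, here is a toy Python model in which a file path and an object name address the same stored bytes. It is a sketch under assumptions, not SwiftStack’s gateway: the `ObjectStore` and `FileGateway` classes, and the rule that the first path component names the container, are hypothetical.

```python
from typing import Dict, Tuple


class ObjectStore:
    """Toy in-memory object store keyed by (container, object name)."""

    def __init__(self) -> None:
        self._objects: Dict[Tuple[str, str], bytes] = {}

    def put_object(self, container: str, name: str, data: bytes) -> None:
        self._objects[(container, name)] = data

    def get_object(self, container: str, name: str) -> bytes:
        return self._objects[(container, name)]


class FileGateway:
    """Hypothetical file facade that maps '/container/dir/file' paths onto objects."""

    def __init__(self, store: ObjectStore) -> None:
        self._store = store

    @staticmethod
    def _split(path: str) -> Tuple[str, str]:
        # Assumption: the first path component is the container, the rest is the object name.
        container, _, name = path.lstrip("/").partition("/")
        if not container or not name:
            raise ValueError(f"path must look like /container/object: {path!r}")
        return container, name

    def write_file(self, path: str, data: bytes) -> None:
        container, name = self._split(path)
        self._store.put_object(container, name, data)

    def read_file(self, path: str) -> bytes:
        container, name = self._split(path)
        return self._store.get_object(container, name)


store = ObjectStore()
gateway = FileGateway(store)

# A file written through the gateway can be read back through the object interface...
gateway.write_file("/archive/2014/game.mp4", b"video bytes")
print(store.get_object("archive", "2014/game.mp4"))  # b'video bytes'

# ...and an object uploaded through the object interface is visible as a file.
store.put_object("archive", "notes.txt", b"hello")
print(gateway.read_file("/archive/notes.txt"))  # b'hello'
```

Because both interfaces resolve to the same key, neither side needs a copy or sync step, which is what lets applications migrate one at a time.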

The second thing we did, in the spirit of integrating more deeply into enterprises, was roll out Active Directory and LDAP integration. When large enterprises build out storage as a service and roll out their own private cloud infrastructure, they don’t need to provision an account for every single person in their organization. They can just set up that integration, and suddenly everyone in the organization can use the storage service internally. They can also do things like chargeback, because one of the other things we do at SwiftStack is provide utilization information for each account. So this feature ties all of those things together and makes it really easy for users to roll out a storage service internally.
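
The chargeback idea can be sketched as a small aggregation over per-account utilization. In this hypothetical Python example, a plain dictionary stands in for the Active Directory/LDAP lookup, and the department names, usage figures and per-GB price are made up for illustration; none of it reflects SwiftStack’s actual reporting interface.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical stand-in for a directory lookup: user -> department.
DIRECTORY: Dict[str, str] = {
    "alice": "marketing",
    "bob": "engineering",
    "carol": "engineering",
}

GIB = 1024 ** 3


@dataclass
class Usage:
    user: str
    bytes_stored: int  # per-account utilization reported by the storage system


def chargeback(usages: List[Usage], price_per_gb_month: float) -> Dict[str, float]:
    """Group per-account usage by department and price it per GB-month."""
    totals: Dict[str, float] = {}
    for u in usages:
        # Users missing from the directory are billed to a catch-all bucket.
        dept = DIRECTORY.get(u.user, "unassigned")
        totals[dept] = totals.get(dept, 0.0) + u.bytes_stored / GIB * price_per_gb_month
    return {dept: round(cost, 2) for dept, cost in totals.items()}


usage = [
    Usage("alice", 200 * GIB),
    Usage("bob", 500 * GIB),
    Usage("carol", 300 * GIB),
    Usage("dave", 10 * GIB),
]
print(chargeback(usage, price_per_gb_month=0.03))
```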

The third thing is something called storage policies. Storage policies allow customers to deploy a multi-data center storage footprint, so they can choose which data centers and which regions are included in each storage policy. This allows storage policies to be used for lots of different purposes. For example, you can have a storage policy for disaster recovery that places data in multiple locations, or if you’re doing backups, you can store them frequently in one location and then periodically move them into a storage policy that spans multiple data centers. This gives people a lot of flexibility in how they deploy the storage environment.
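
A minimal sketch of the backup-then-replicate pattern, assuming a model where (as in Swift) a storage policy is chosen when a container is created, so re-tiering an object means copying it into a container that uses a different policy. The policy names, region names and the `Cluster` class are hypothetical, and the sketch only records which regions a policy targets rather than actually moving bytes between sites.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class StoragePolicy:
    name: str
    regions: FrozenSet[str]  # regions that hold a copy of each object


@dataclass
class Cluster:
    policies: Dict[str, StoragePolicy]
    containers: Dict[str, str] = field(default_factory=dict)            # container -> policy name
    objects: Dict[str, Dict[str, bytes]] = field(default_factory=dict)  # container -> {name: data}

    def create_container(self, container: str, policy: str) -> None:
        # The policy is fixed when the container is created.
        self.containers[container] = policy
        self.objects[container] = {}

    def put(self, container: str, name: str, data: bytes) -> None:
        self.objects[container][name] = data

    def regions_for(self, container: str) -> List[str]:
        return sorted(self.policies[self.containers[container]].regions)

    def move(self, name: str, src: str, dst: str) -> None:
        # Re-tier an object: copy it into a container with another policy, then drop the original.
        self.objects[dst][name] = self.objects[src].pop(name)


local = StoragePolicy("backup-local", frozenset({"us-west"}))
multi = StoragePolicy("dr-multisite", frozenset({"us-west", "us-east", "eu-central"}))
cluster = Cluster({p.name: p for p in (local, multi)})

cluster.create_container("backups-recent", "backup-local")
cluster.create_container("backups-dr", "dr-multisite")

# Frequent backups land in a single region...
cluster.put("backups-recent", "db-2014-06-01.dump", b"...")
# ...and are periodically moved to the multi-site policy.
cluster.move("db-2014-06-01.dump", "backups-recent", "backups-dr")
print(cluster.regions_for("backups-dr"))
```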

If you could only choose three words to sum up SwiftStack’s value as an SDS, what would they be?

I’m going to say simple, cost-effective, scale.

Can you explain why you’ve chosen those three words?

I chose simple because that is the first aha moment that people have when using our software. Users are conditioned to feel like, “Okay, we’re about to use open source software on white box equipment; let’s roll up our sleeves and put on our hard hats.” They’re bracing themselves for a lot of work to get the system up and running. Once they experience a product built around the technology, they realize how simple it is and that it’s not something to be scared of. Even though the product involves open source software and commodity hardware, it’s going to be in their control.

The second word is cost-effective. Because we’ve put a lot of emphasis on the management and the tools, it makes [using] open source less expensive. Even though you’re purchasing an open source product, it’s a lot less expensive than doing custom development, whether that’s done in-house, where you’re locking yourself into your own knowledge, or whether you’re bringing on other folks from the outside and needing to go back to them if you want to add a capability or put upgrades in place. From a software perspective, not only do we have the leverage of a large and thriving open source development ecosystem, but we are also able to amortize all of the work that we put into deployment, management and scaling so that software costs really shrink. I think it goes without saying that the hardware costs are also going to be scrunched down a bit. We’ve designed the software so that it can be deployed across different types of equipment, and you don’t need to install a large footprint that remains static over long periods of time. Upgrades can be done very incrementally. You can have a strategy of buying what you need when you need it, and that also has a big cost-savings advantage.

Lastly is scale. The whole solution is built around not having any single point of failure in the entire system. Every time we are adding a capability [we think], how is this going to scale? How do we make sure that there are no single points of failure? How do we make the transition easy for people when we’re adding new equipment or new data centers or new hardware? How do we make sure that scaling process works very reliably?
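
A simplified model of Swift’s ring shows one way a design avoids a single point of failure: an object’s path is hashed to a partition, and each partition is assigned to devices in different zones, so losing one disk, server or zone never removes every copy. This is an approximation under stated assumptions. Real Swift mixes a cluster-specific hash prefix and suffix into the path and uses a precomputed, weighted assignment table built by `swift-ring-builder`; the device list, zone names and round-robin replica choice here are hypothetical.

```python
import hashlib
import struct
from typing import List, Tuple

PART_POWER = 8                 # 2**8 = 256 partitions (toy value; real rings use more)
PART_SHIFT = 32 - PART_POWER

# Hypothetical devices as (device id, zone) pairs.
DEVICES: List[Tuple[str, str]] = [
    ("d0", "z1"), ("d1", "z1"),
    ("d2", "z2"), ("d3", "z2"),
    ("d4", "z3"), ("d5", "z3"),
]


def partition(account: str, container: str, obj: str) -> int:
    """Map an object path to a partition using the top PART_POWER bits of its MD5 hash."""
    path = f"/{account}/{container}/{obj}".encode()
    digest = hashlib.md5(path).digest()
    return struct.unpack_from(">I", digest)[0] >> PART_SHIFT


def replicas(part: int, count: int = 3) -> List[str]:
    """Pick `count` devices for a partition, never placing two copies in the same zone."""
    chosen: List[str] = []
    used_zones = set()
    n = len(DEVICES)
    for i in range(n):
        dev, zone = DEVICES[(part + i) % n]
        if zone not in used_zones:
            chosen.append(dev)
            used_zones.add(zone)
        if len(chosen) == count:
            break
    return chosen


p = partition("AUTH_demo", "videos", "game.mp4")
print(p, replicas(p))
```

Any proxy can compute the same placement from the path alone, so no central lookup service sits in the data path; adding devices changes the assignment table rather than requiring a bigger single server.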

Now that you’ve talked about SwiftStack’s value, can you walk us through an example of when an enterprise would benefit from SwiftStack and how they would go about implementing it?

Backup and archive are where we get a lot of use when we first engage with customers, and the reason we get slotted in there is that the technology is very well suited to putting data in multiple data centers and can make the backup and disaster recovery processes really simple. For example, we work with a sports video broadcasting company called Pac-12 Networks and [provide] an archive tier for that production network. They use the file system gateway to put data in and out of the storage system (so it blends right in with their existing applications), and when they need to add capacity, they can just plug in more units.

[We have other customers] that are doing virtual machine backups. We are working with a financial services firm that has database backups, virtual machine backups and desktop backups. Each of those uses different applications, and a lot of them natively support our object storage API; when the applications don’t, they use the file system gateway to put data into the system. Backups and archives are [storage needs] that continuously grow over time. When you run out of capacity or your license [renewal] is coming up, people ask, “What are the alternative solutions for this? How can we do things in a more cost-effective and scalable way?” That’s when we get called in. For example, another customer of ours lost a data center during Hurricane Sandy, and he really wanted to find a multi-site storage solution to make sure he didn’t lose data in those situations again.

The last use case is that enterprises nowadays more and more are building out new applications [and have a lot of data that needs to be stored]. For example, we’re working with eBay and there are lots of opportunities where data needs to be stored and needs to be served. When we serve that data, it’s going to a web browser or it’s going to a mobile device, and so having a storage system that natively speaks HTTP is a huge advantage — it removes the translation layer and means that we can serve more customers and deal with lots of incoming requests all at the same time. That’s another use case that we are really good at solving.
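
Because Swift’s API is plain HTTP, storing or fetching an object is a single request against a URL of the form `/v1/{account}/{container}/{object}`, authenticated with an `X-Auth-Token` header. This Python sketch only builds the requests with the standard library and never sends them; the endpoint URL, account name and token are hypothetical placeholders.

```python
import urllib.request
from typing import Optional
from urllib.parse import quote

# Hypothetical endpoint and token; a real client obtains both from the auth service.
STORAGE_URL = "https://swift.example.com/v1/AUTH_demo"
TOKEN = "example-token"


def object_request(method: str, container: str, obj: str,
                   data: Optional[bytes] = None) -> urllib.request.Request:
    """Build an object API request: one HTTP verb against one URL per object."""
    url = f"{STORAGE_URL}/{quote(container)}/{quote(obj)}"
    headers = {"X-Auth-Token": TOKEN}
    if data is not None:
        headers["Content-Type"] = "application/octet-stream"
    return urllib.request.Request(url, data=data, headers=headers, method=method)


upload = object_request("PUT", "images", "item 123.jpg", b"\xff\xd8...")
download = object_request("GET", "images", "item 123.jpg")
print(upload.get_method(), upload.full_url)
print(download.get_method(), download.full_url)
# urllib.request.urlopen(download) would send it; omitted because the endpoint is fictional.
```

The same GET URL could be handed to a browser or mobile app (for example with a public-read container), which is the "no translation layer" point made above.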

We recently spoke with Inktank (who has since been purchased by Red Hat) about how they’re utilizing another open source project, Ceph, to transform the software-defined storage industry. How does Swift differ from Ceph, and why did you choose to work with Swift over other open source projects?

If you rewind to a few years ago, before the notion of software-defined storage, you still saw different storage products that would satisfy different workloads. The same is true in the world of software-defined storage: you see different projects that are focused on different things. Ceph is focused on something different from Swift. In particular, what sets Swift apart from Ceph is that Swift is focused on unstructured data.

Specifically, Swift is focused on object storage and on being able to ingest data, store data across multiple sites and deal with high concurrency. We can take data in and then reconcile that data even after it has been ingested. We see that [type of storage] being used for backups, archives, videos and documents. Where we do not see it being used is for databases or running virtual machines, because we opt out of having a strongly consistent data store in order to scale more broadly and distribute data more widely. Where we see Ceph being used is for running virtual machines. Then there are other use cases where other storage products come into play.
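
The trade-off described here is eventual consistency: a write can be accepted while some replicas are unreachable and reconciled later. A minimal sketch, assuming a last-write-wins rule keyed on timestamps (roughly how Swift’s background replication settles conflicting copies), shows why this suits archives but not databases: until `reconcile` runs, a read from a lagging replica can return the old version. The `Version` type and the replica dictionaries are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Version:
    timestamp: float          # time the write was accepted
    data: Optional[bytes]     # None would mark a delete (a "tombstone")


Replica = Dict[str, Version]  # object name -> latest version this replica knows about


def reconcile(replicas: List[Replica]) -> None:
    """Bring every replica up to date: for each object, the newest timestamp wins."""
    names = set().union(*replicas)
    for name in names:
        newest = max((r[name] for r in replicas if name in r), key=lambda v: v.timestamp)
        for r in replicas:
            r[name] = newest


# Replica c was unreachable when "report.pdf" was overwritten at t=100,
# but it alone accepted a newer write of "log.txt" at t=120.
a = {"report.pdf": Version(100.0, b"v2")}
b = {"report.pdf": Version(100.0, b"v2")}
c = {"report.pdf": Version(50.0, b"v1"), "log.txt": Version(120.0, b"new")}

print(c["report.pdf"].data)  # b'v1' (stale read before reconciliation)
reconcile([a, b, c])
print(c["report.pdf"].data, a["log.txt"].data)  # b'v2' b'new'
```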

How does the collaborative environment of an open source project benefit industries such as object storage? What are your thoughts on the role of open source communities in software development? Do you anticipate these communities will become more prevalent across the board, or do you believe there are certain segments of software that better lend themselves to this type of communal construction?

Asking what role open source has to play in which categories of software is a really great question. It’s asking: is data center infrastructure a domain that open source is particularly good at serving? I think the answer is yes. When you have a product whose target user is technical, that’s where open source can thrive, because that’s where you can build a large ecosystem. You can build that large ecosystem because the users of the software can also contribute and form a community. In very few other industries is that really the case. For example, if you’re using a word processor, the users of that software aren’t people who can go and contribute to it. But when it comes to data centers and data center infrastructure, databases or big data, you see open source thrive because someone who is consuming and using that software can also participate in some way in its development. I think we’re in a golden age of open source when it comes to the data center.

Transitioning back to SwiftStack, what can users expect to see in the new year? Are there any new capabilities you’re working on or would like to see incorporated into the SwiftStack platform that have not yet been developed?

There’s one feature on our immediate horizon that we are actively working on with the community, and that’s new data protection strategies that reduce the amount of hardware needed to achieve the same durability. This feature is going to be really great for large-scale archiving projects. We’ll be able to reduce even further the cost of storing a given amount of data.
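
The interview doesn’t name the technique, but erasure coding is a common example of a data protection strategy that cuts hardware for comparable durability, and Swift later added erasure-coded storage policies. The arithmetic below compares raw capacity for three-way replication with a 10+4 erasure code; the archive size and scheme parameters are illustrative assumptions, not SwiftStack figures.

```python
def replicated_raw_tb(usable_tb: float, replicas: int) -> float:
    """Raw disk needed when every object is stored as `replicas` full copies."""
    return usable_tb * replicas


def erasure_coded_raw_tb(usable_tb: float, k: int, m: int) -> float:
    """Raw disk needed when each object is split into k data fragments plus m parity fragments."""
    return usable_tb * (k + m) / k


usable = 1000.0  # TB of archive data (illustrative)
print(replicated_raw_tb(usable, 3))          # 3000.0
print(erasure_coded_raw_tb(usable, 10, 4))   # 1400.0
```

Triple replication survives the loss of two copies; a 10+4 code survives the loss of any four fragments while needing less than half the raw disk. The cost is extra CPU and network work to encode and rebuild fragments, which is why it fits large archives better than small, frequently accessed objects.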

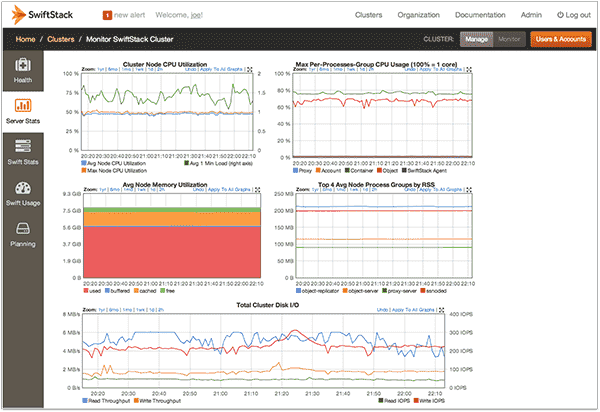

All of this is going to be enabled by the SwiftStack Controller, a software-defined storage controller. This controller model, which oversees a distributed system, provides a new way for people to consume open source. The benefit of the controller is that the storage system itself can have no single point of failure, while everything it manages can be controlled from one central point. What we’ve done at SwiftStack, and are rolling out right now, is the ability to offer that management as a service, so the commodity storage equipment can stay on customer premises, in the customer’s own data center, and be managed remotely. It’s a great way for people to easily try out SwiftStack.

Browse all of our information on software-defined storage — plus tons of other great content on top software reviews, implementation advice, top features and other best practices — by visiting the Business-Software.com blog homepage.